It’s been a few months since the last post. A busy start to 2026 — a lot happening at Dentally, and the smallholding demanding its usual spring attention. Between the two, I’ve been thinking a lot about how leaders spend their time, where leverage really comes from, and how AI can help with the unglamorous but high‑impact parts of the job.

This post covers both.

Using AI to be a more productive leader

The two things taking up some of my engineering time outside of the day job have been AI-assisted tooling and competency frameworks.

An important part of being a leader is shaping effective teams and knowing where your time will have the most impact. End-of-year reviews and career development plans take time to build, but they can be extremely effective at shaping expectations and setting clear goals.

On the frameworks side: I’ve worked closely with the people team building out a competency framework to set clear expectations for engineers and leaders at Dentally. What does good look like at each level? What are the behaviours and outcomes we’re hiring and promoting against? It sounds straightforward but doing it well takes time. Getting it wrong means people either don’t know what’s expected of them, or they game a framework that doesn’t actually measure what matters.

Once I had the frameworks in reasonable shape, I started using AI — specifically connecting MCP services to Jira, Git, and Slack — to pull together career development and end-of-year review documents for team members. Rather than starting from a blank page, I can now generate a grounded first draft: what someone’s been working on, how it maps against the framework, where the gaps are. It still needs human judgement to turn it into something meaningful and fair, but the grunt work is largely done. Minutes, not days.

Extending the theme of understanding how teams and individuals operate, I started building a DORA metrics tool in Python. Vibe coding, as it’s being called: using AI-generated code, iterating fast, not worrying about perfection. The output is deliberately simple: static HTML files, generated once a month. No dashboards, no infrastructure, no ongoing maintenance headache. Good enough to surface the insights I need.
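To give a flavour of how small such a tool can be, here is a minimal sketch using only the Python standard library. It is an illustration, not the actual tool: the `Deployment` record, the `dora_summary` and `render_report` functions, and the sample numbers are all hypothetical, and it assumes the deployment data has already been collected from wherever it lives.

```python
from dataclasses import dataclass
from datetime import date
from html import escape
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    day: date                              # when the change reached production
    lead_time_hours: float                 # first commit to production
    failed: bool = False                   # caused an incident or rollback?
    restore_hours: Optional[float] = None  # time to recover, if it failed


def dora_summary(deploys: list[Deployment], days_in_month: int) -> dict[str, str]:
    """Reduce one month of deployments to the four DORA metrics."""
    if not deploys:
        # A month with no deployments is valid input, not an error.
        return {name: "n/a" for name in (
            "Deployment frequency", "Lead time for changes",
            "Change failure rate", "Time to restore")}
    failures = [d for d in deploys if d.failed]
    restores = [d.restore_hours for d in failures if d.restore_hours is not None]
    per_week = len(deploys) / (days_in_month / 7)
    lead_time = median(d.lead_time_hours for d in deploys)
    return {
        "Deployment frequency": f"{per_week:.1f} per week",
        "Lead time for changes": f"{lead_time:.1f} h (median)",
        "Change failure rate": f"{100 * len(failures) / len(deploys):.0f}%",
        "Time to restore": f"{median(restores):.1f} h (median)" if restores else "n/a",
    }


def render_report(title: str, summary: dict[str, str]) -> str:
    """Render the summary as a single self-contained HTML page."""
    rows = "\n".join(
        f"    <tr><th>{escape(name)}</th><td>{escape(value)}</td></tr>"
        for name, value in summary.items()
    )
    return (
        "<!doctype html>\n"
        f'<html><head><meta charset="utf-8"><title>{escape(title)}</title></head>\n'
        f"<body>\n  <h1>{escape(title)}</h1>\n  <table>\n{rows}\n  </table>\n"
        "</body></html>\n"
    )


# Made-up sample data, purely for illustration.
sample = [
    Deployment(date(2026, 2, 3), lead_time_hours=10),
    Deployment(date(2026, 2, 10), lead_time_hours=20),
    Deployment(date(2026, 2, 17), lead_time_hours=30, failed=True, restore_hours=2),
    Deployment(date(2026, 2, 24), lead_time_hours=40),
]
report_html = render_report("DORA metrics, February 2026", dora_summary(sample, 28))
```

Writing `report_html` to a file once a month (for example with `pathlib.Path.write_text`) is all the "infrastructure" a static report like this needs.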

I’ve genuinely been surprised by how much you can achieve this way. A working tool with real utility, in hours rather than weeks. It’s not production-grade software. It doesn’t need to be. The point is the insight, not the elegance of the implementation.

Then there’s the day job — setting direction, hiring leaders, handling incidents, and working closely with peers as the use of AI rapidly reshapes how we build and operate software. Currently, the focus is more on communication than tooling: clarifying intent, defining boundaries, and guiding teams through uncertainty during rapid change.

The common thread is the same one that shows up in the side projects — focus on impact, be explicit about trade‑offs, and avoid pretending certainty where there isn’t any.

That tension between speed, assumptions, and rework shows up in surprising places.

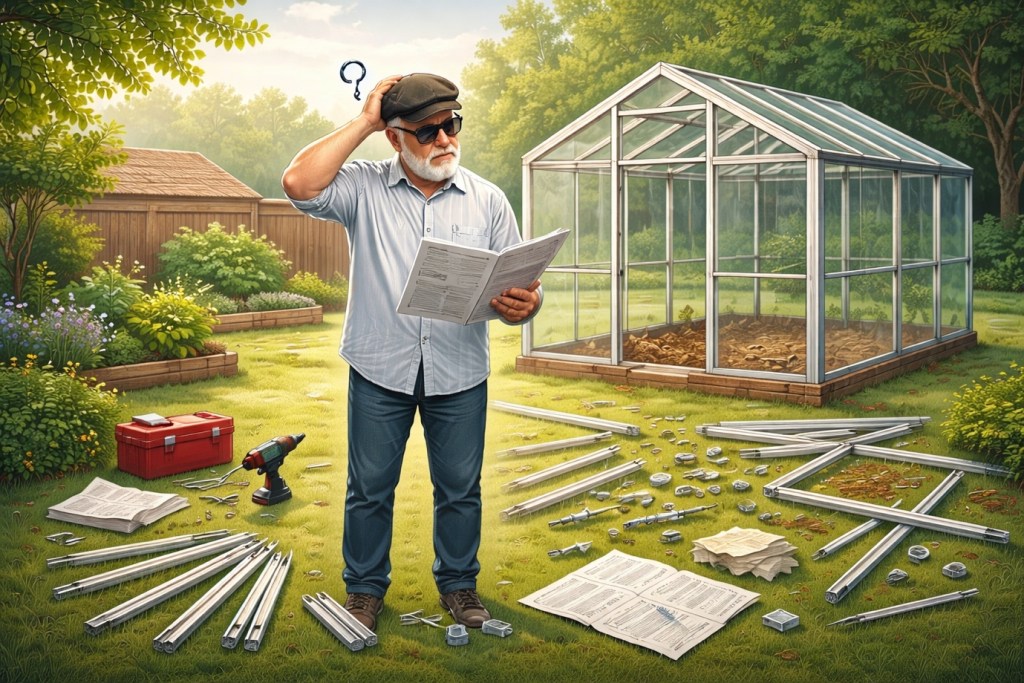

The greenhouse. And the instructions.

At home, spring means the restart of the vegetable garden. New raised beds this year, and a greenhouse — it felt like the right moment to invest in a proper structure rather than bodging something together.

The greenhouse arrived flat-packed. The instructions looked clear. For once, I read them cover to cover before touching anything. One of the key rules: open the boxes in sequence, assemble one before moving to the next. Don’t jump ahead. Don’t improvise.

I did as I was told. It went well, right up until it didn’t.

Buried in box four of five was an appendix — parts and instructions that related back to boxes one and two. Steps I’d already completed. Things I’d need to redo, or at least revisit.

Clear instructions, followed precisely, and still caught out. Not because I cut corners, but because the information I needed wasn’t available when I needed it.

There’s something in that which maps directly onto the AI tooling work. When I’m vibe coding with AI, I give it a prompt, it gives me something plausible, and I build on it. The problem is that the AI doesn’t always tell you upfront what it doesn’t know, or what assumptions it’s made that will matter later. You find out in box four. The code works until you hit the edge case that was always going to be a problem, but wasn’t visible at the start.

The mitigation in both cases is the same: keep the scope small, keep the iterations short, and don’t build too far on top of something you haven’t tested. With the greenhouse, the rework was annoying but manageable. With software, the further you’ve gone before you discover the buried appendix, the more expensive it gets. That isn’t new with AI; it’s just a reminder.
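As a small illustration of "test before you build on it", consider a hypothetical helper, `change_failure_rate`, of the kind an AI assistant might plausibly draft. A first version that simply divides failures by deployments carries a hidden assumption, namely that there is always at least one deployment. The sketch below makes that assumption explicit and checks the awkward inputs straight away; the function name and behaviour are assumptions for the example, not taken from any real tool.

```python
from typing import Optional


def change_failure_rate(failed: int, total: int) -> Optional[float]:
    """Share of deployments that failed, or None when there were none."""
    if total == 0:
        # The "box four" case: a quiet month with no deployments.
        # A naive failed / total would raise ZeroDivisionError here.
        return None
    return failed / total


# Check the awkward inputs before anything else depends on this function.
assert change_failure_rate(1, 4) == 0.25
assert change_failure_rate(0, 4) == 0.0
assert change_failure_rate(0, 0) is None
```

Three assertions take a minute to write, and they surface the assumption at the start rather than several layers of code later.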

Next year the vegetable garden will be more organised — I’ve got the raised beds in place and the greenhouse standing (correctly assembled, eventually). And the AI tooling will keep evolving. I’m not trying to make it perfect. I’m trying to make it useful.

I’d be curious whether anyone else has hit the same pattern with AI-generated code — that moment where something that looked solid turns out to have a hidden assumption baked in from the start. Leave a comment if so.
